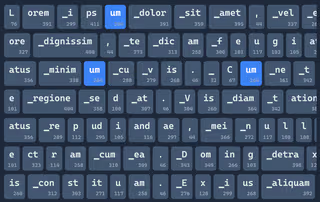

Byte-Pair Encoding Explained: The Algorithm Powering Modern LLM Tokenization

From a 1994 compression algorithm to the tokenizer powering modern LLMs: how Byte-Pair Encoding works and why it became the standard.

Hi, I'm

I'm a dedicated computer scientist passionate about web development and machine learning, committed to imparting knowledge through teaching.

Featured Project

Streamline your workflow with quick access to clipboard management, translations, emojis, and much more — all through one powerful, extensible launcher.

Featured Project

A fully custom DIY thermal printing camera that I designed and built, with many additional features built in.

Working through the edge cases of vocabulary design to understand why modern LLMs use subword tokenization.

Interactive tools and visualizations for teaching LLM architecture, gathered from preparing a workshop on LLMs.

Reasoning models promise AI that "thinks", but the reality is less magical and also very expensive.